Welcome to Scarab-Runtime

Scarab-Runtime is an AI-agent-first runtime built on Linux. It provides first-class primitives for agent identity, capability-based security, tool invocation, and lifecycle management, all implemented in userspace using existing Linux kernel primitives.

What is Scarab-Runtime?

Traditional operating systems manage processes. Scarab-Runtime manages agents: long-running, LLM-driven programs that reason, plan, use tools, and communicate with each other. Agents are:

- Isolated by capability tokens, seccomp-BPF, AppArmor profiles, cgroups, and nftables rules

- Audited - every action is written to an append-only, tamper-evident log

- Observable - structured per-agent observation logs capture the full reasoning trace

- Composable - agents can spawn children, communicate over a message bus, and share state via a blackboard

Components

| Component | Binary | Purpose |

|---|---|---|

| agentd | agentd | Core daemon: agent lifecycle, tool dispatch, capability enforcement, audit logging |

| ash | ash | CLI shell for spawning, inspecting, terminating, and configuring agents |

| libagent | (library) | Shared types, manifest parser, IPC protocol, Agent SDK |

| example-agent | example-agent | Reference implementation of the Plan→Act→Observe loop |

Audiences

This documentation is written for two audiences:

- Operators - people who run

agentd, spawn agents, manage secrets, review audit logs, and approve sensitive operations. Start with Getting Started. - Agent developers - Rust programmers writing agent binaries using

libagent. Start with the Developer Guide.

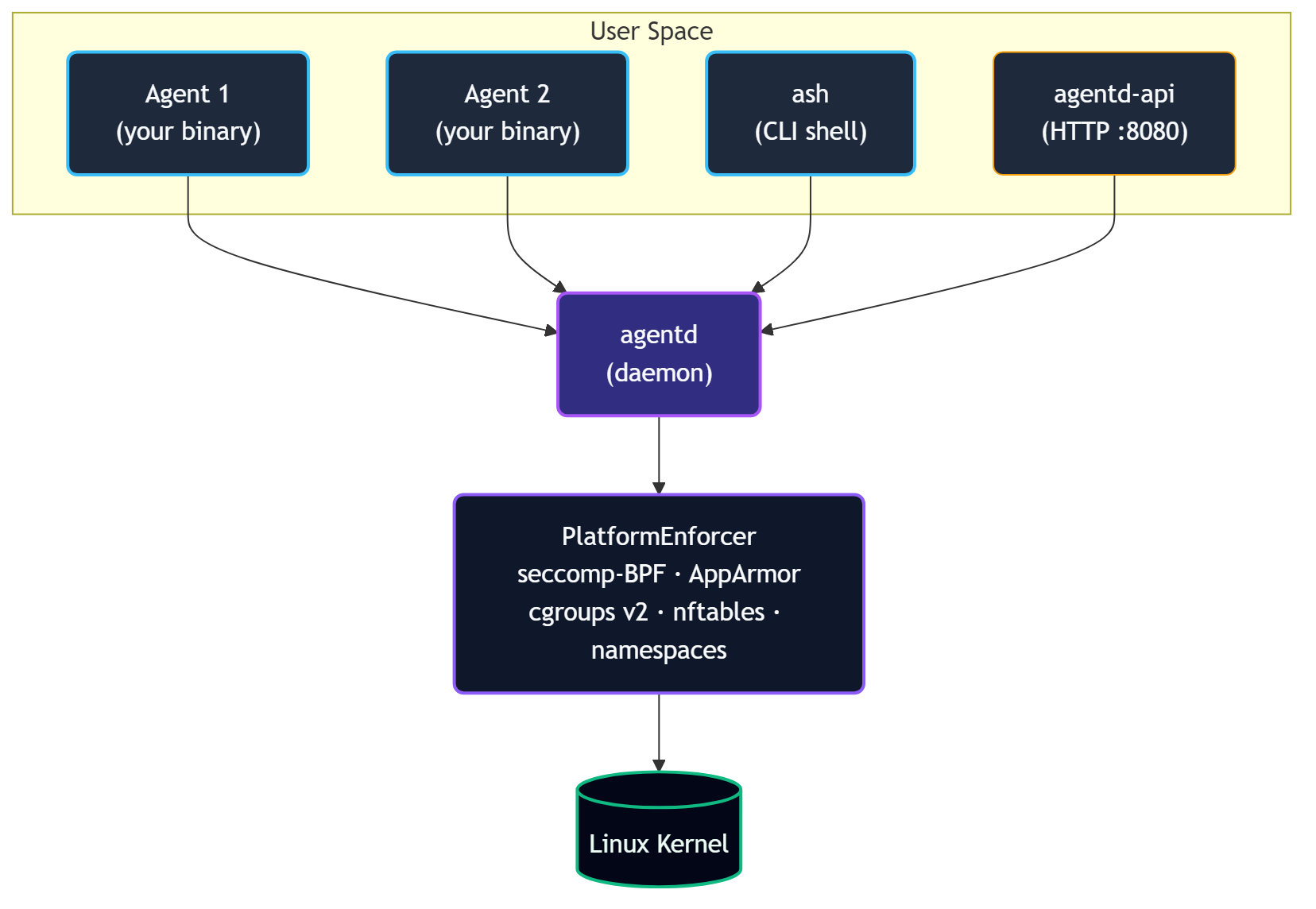

Architecture

Scarab-Runtime uses a userspace-first design. Security enforcement is handled entirely by Linux kernel primitives via a swappable PlatformEnforcer abstraction, with no kernel modules required.

Component Diagram

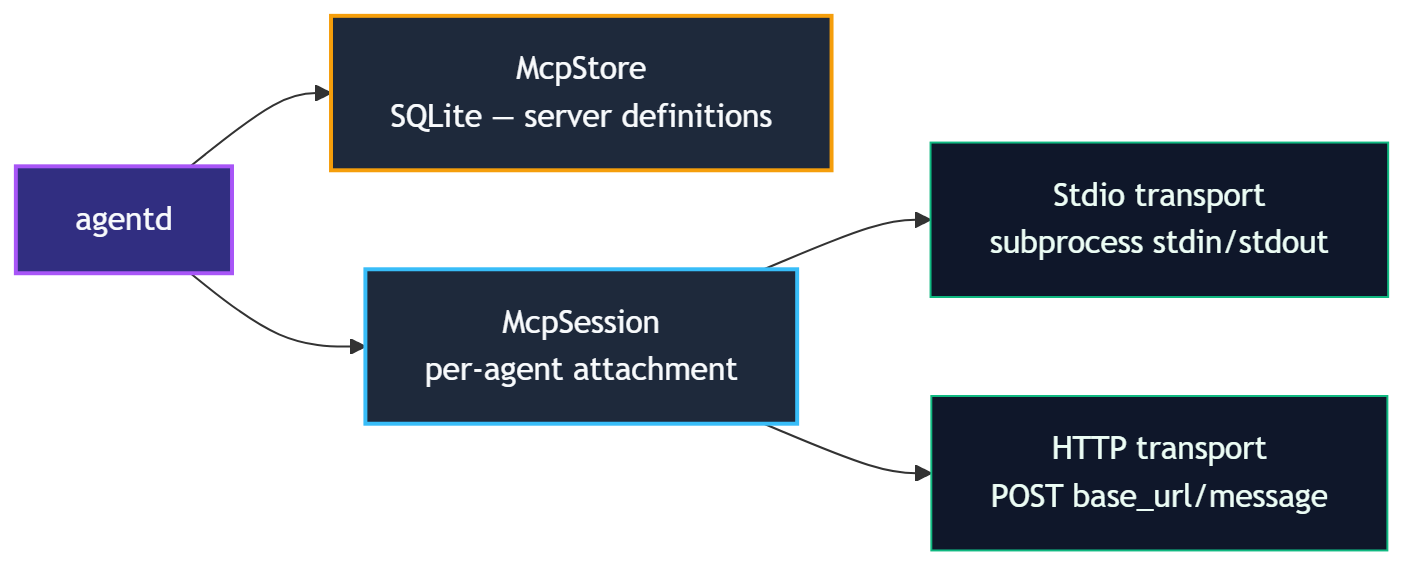

agentd: The Daemon

agentd is the trusted root of the system. It:

- Spawns agent processes from manifest files, injecting

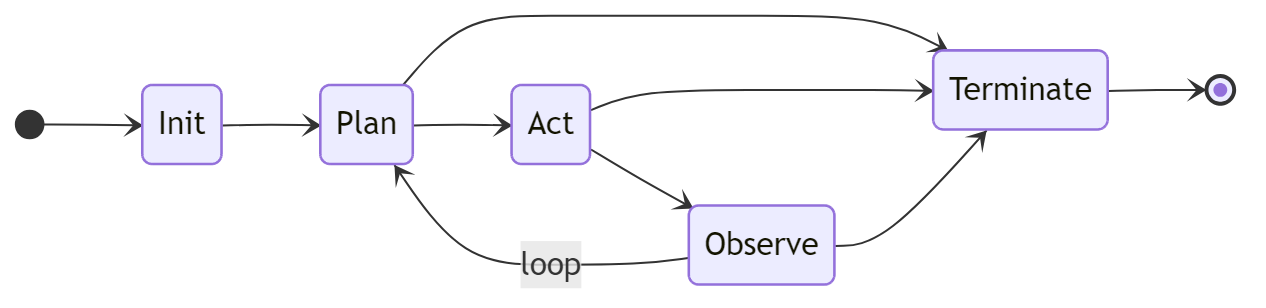

SCARAB_AGENT_IDandSCARAB_SOCKET - Manages the lifecycle state machine (

Init → Plan → Act → Observe → Terminate) - Runs the tool registry and dispatches all tool calls

- Enforces capability checks on every IPC request

- Maintains the audit trail, memory store, observation log, and workspace snapshots

- Runs the message bus and blackboard

- Manages secrets in heap-only sealed storage

- Monitors for behavioral anomalies

- Routes escalations up the agent hierarchy

- Embeds the HTTP API gateway (

agentd-api) on127.0.0.1:8080

agentd is not an agent itself; it is the trusted root that applies security profiles to all agents.

ash: The Shell

ash is a CLI client that communicates with agentd over the same Unix socket agents use. It provides 25+ subcommand groups for every aspect of agent management.

libagent: The Library

libagent is the shared crate used by both agentd and agent binaries:

types.rs-AgentInfo,LifecycleState,TrustLevel,ResourceLimits,PlanStepcapability.rs- capability token parsing and glob matchingmanifest.rs- YAML manifest parsing and validationaudit.rs- hash-chained audit entry typesipc.rs- allRequest/Responseprotocol variantsclient.rs-AgentdClienttyped async IPC clientagent.rs-AgentSDK high-level wrapper

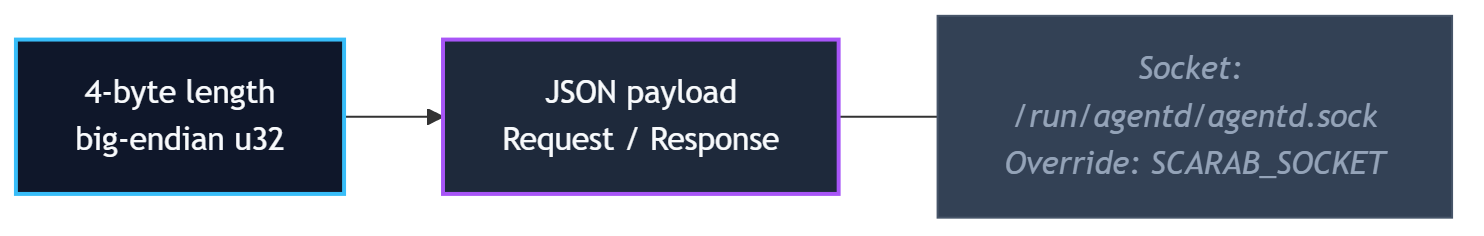

IPC Protocol

All communication between agents/ash and agentd uses JSON over a Unix domain socket with a 4-byte big-endian length prefix:

Default socket path: /run/agentd/agentd.sock

Override with SCARAB_SOCKET environment variable or --socket flag on ash.

Security Layers

Scarab-Runtime employs defense in depth with five independent enforcement layers:

- Tool dispatch: capability check before every tool invocation

- seccomp-BPF: per-agent syscall allowlist derived from manifest at spawn time

- AppArmor: per-agent MAC profile constraining file and network access

- cgroups v2: per-agent resource limits (memory, CPU shares, open files)

- nftables: per-agent network policy (none / local / allowlist / full)

Additionally, each agent runs in its own set of Linux namespaces (PID, network, mount) for process isolation.

Workspace Isolation

Every agent gets an overlayfs workspace. Writes go to an upper layer; the lower layer (base filesystem) is never modified. Snapshots checkpoint the upper layer. Rollback restores a previous snapshot. Commit promotes the upper layer permanently.

Design Principles

These nine principles guide every design decision in Scarab-Runtime.

1. Userspace-First

All security enforcement uses existing Linux kernel primitives: cgroups, seccomp-BPF, AppArmor, nftables, and namespaces. No kernel modules are required. This keeps the implementation portable, auditable, and maintainable. Kernel module migration remains a future option if performance demands it.

2. Capability-Based Security

There are no traditional UNIX permissions. All access is mediated by unforgeable capability tokens in the format domain.action:scope. An agent can only do what its manifest explicitly declares. Glob matching on scopes allows fine-grained path- or name-scoped permissions (e.g., fs.write:/home/agent/workspace/**).

3. Agent-Native

The agent is the first-class primitive, not the process. Every agent has an identity (UUID), a lifecycle state, an audit trail, memory, an observation log, and a workspace. The OS-level process is an implementation detail.

4. Declarative Configuration

Agent behavior is defined by YAML manifests, not imperative startup code. A manifest fully describes what an agent can do: capabilities, resource limits, network policy, allowed syscalls, secret access, and lifecycle parameters. The daemon derives all enforcement artifacts from the manifest at spawn time.

5. Defense in Depth

No single security layer is the whole story. Tool dispatch, seccomp, AppArmor, nftables, and namespaces each enforce independently. A bypass of one layer does not compromise the others.

6. Never Block, Always Gate

Agents are not hard-denied capabilities; out-of-scope actions escalate up the agent hierarchy. The human is the last resort, not the first call. Isolation and reversibility (workspace snapshots, rollback) are the safety net. This keeps human interrupt rate proportional to genuinely novel situations.

7. Kernel is the Authority

Per-agent AppArmor and seccomp profiles are derived from the manifest at spawn time and enforced by the kernel. The runtime enforces what the manifest declares; self-reported capability compliance is an audit aid, not a security boundary. An agent cannot lie to the kernel.

8. Universal Sandbox

Every agent runs in a sandbox regardless of trust level. privileged trust means privileged within the agent world, not write access to the host system (/usr, /lib, /bin, /boot are outside every agent's write scope by definition). agentd itself is not an agent; it is the trusted root that applies profiles to all agents, including the root agent.

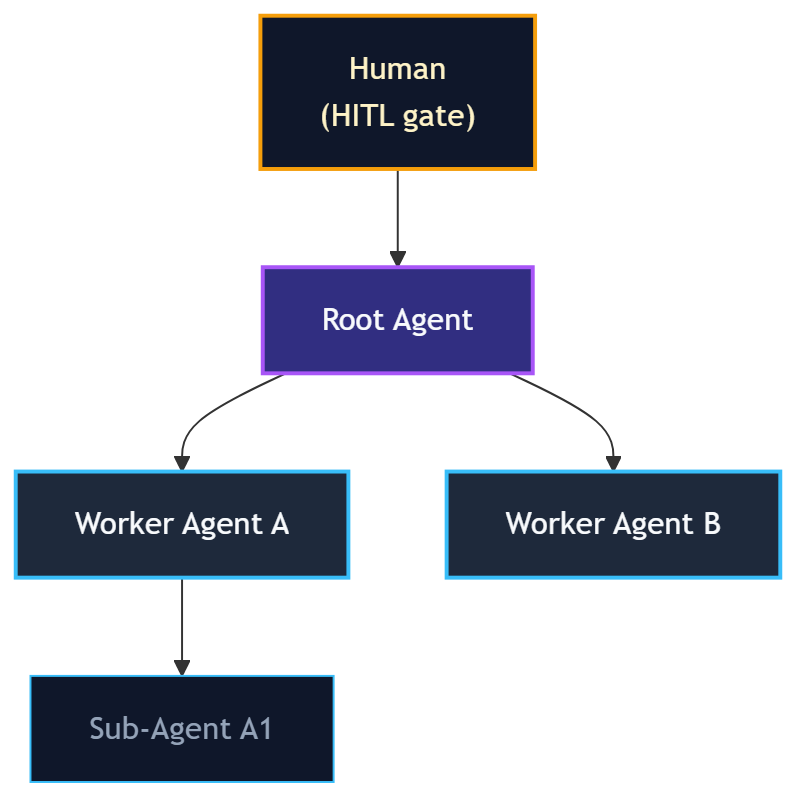

9. Hierarchy Before Human

Escalations (capability grants, anomaly alerts, plan deviations) route to the requesting agent's parent first. Only the root agent (which has no parent) escalates to the human HITL gate. This keeps the human interrupt rate low and ensures escalations are resolved at the most appropriate level in the hierarchy.

Installation

Prerequisites

- Rust stable toolchain - install from rustup.rs

- Linux - required for full functionality (seccomp-BPF, AppArmor, cgroups, nftables, overlayfs)

- Recommended: Windows with WSL 2 (Debian) for development on Windows hosts

Optional tools used by specific features:

python3,node- needed forsandbox.execwith those runtimesmdbook- to build this documentation

Building from Source

# Clone the repository

git clone <repo-url> Scarab-Runtime

cd Scarab-Runtime

# Build all crates

cargo build

# Run all tests (unit + integration)

cargo test

# Run enforcement tests (requires root - validates cgroups, AppArmor, seccomp)

sudo cargo test

Build artifacts are placed in target/debug/:

| Binary | Path |

|---|---|

agentd | target/debug/agentd |

ash | target/debug/ash |

example-agent | target/debug/example-agent |

Release Build

cargo build --release

# Binaries in target/release/

System Directories

agentd expects or creates these directories at runtime:

| Path | Purpose |

|---|---|

/run/agentd/ | Unix socket (agentd.sock) |

/var/lib/scarab-runtime/ | Agent install store, SQLite memory DB |

/var/log/scarab-runtime/ | Audit log, observation logs |

For development, agentd will create these under /tmp/agentd-* if it lacks permission to write to /run/ and /var/.

OpenRouter API Key

The lm.complete and lm.embed tools route LLM requests through OpenRouter. Set your key before starting agentd:

export OPENROUTER_API_KEY=sk-or-...

Or register it as a secret so agents can reference it via handle syntax:

ash secrets add openrouter-key

Starting agentd

agentd is the daemon that manages all agents. It must be running before you can use ash or spawn any agents.

Starting the Daemon

# Development (foreground, with logging)

cargo run --bin agentd

# Or, if you built with --release

./target/release/agentd

# With verbose tracing

RUST_LOG=debug cargo run --bin agentd

agentd listens on a Unix domain socket. Default path: /run/agentd/agentd.sock

To use a custom socket path:

agentd --socket /tmp/my-agentd.sock

Then tell ash to use the same socket:

ash --socket /tmp/my-agentd.sock list

Verifying the Daemon is Running

ash ping

# Output: pong

ash status

# Output: daemon status, agent count, uptime

HTTP API Server

agentd also starts an HTTP API gateway on 127.0.0.1:8080 by default. The gateway exposes all agent management operations over REST, allowing external services and CI pipelines to interact with the daemon without a direct Unix socket connection.

Verify it is running:

curl http://127.0.0.1:8080/health

# {"status":"ok","version":"...","uptime_secs":42}

To change the bind address:

AGENTD_API_ADDR=0.0.0.0:9090 cargo run --bin agentd

See API Gateway for the full HTTP API reference, authentication setup, and CORS configuration.

Stopping the Daemon

Send SIGTERM or SIGINT (Ctrl+C in the foreground). agentd will:

- Transition all running agents to

Terminate - Flush the audit log

- Release all secrets from memory (zeroized)

- Exit cleanly

Log Output

agentd uses structured logging via the tracing crate. Control verbosity with RUST_LOG:

RUST_LOG=info agentd # default: info-level structured events

RUST_LOG=debug agentd # verbose: all tool dispatches, IPC frames

RUST_LOG=trace agentd # very verbose: internal state transitions

Running as a System Service

For production use, run agentd as a systemd service. Example unit file:

[Unit]

Description=Scarab-Runtime Agent Daemon

After=network.target

[Service]

ExecStart=/usr/local/bin/agentd

Restart=on-failure

Environment=RUST_LOG=info

Environment=OPENROUTER_API_KEY=sk-or-...

[Install]

WantedBy=multi-user.target

sudo cp etc/systemd/agentd.service /etc/systemd/system/

sudo systemctl enable --now agentd

Your First Agent

This tutorial walks you through spawning and interacting with a minimal agent.

Step 1: Start agentd

cargo run --bin agentd &

ash ping # should print: pong

Step 2: Write a Manifest

Create my-agent.yaml:

apiVersion: scarab/v1

kind: AgentManifest

metadata:

name: my-first-agent

version: 1.0.0

description: A minimal test agent.

spec:

trust_level: sandboxed

capabilities:

- tool.invoke:echo

- tool.invoke:agent.info

lifecycle:

restart_policy: never

timeout_secs: 60

command: /bin/sh

args:

- -c

- "echo hello from my-first-agent"

Step 3: Validate the Manifest

ash validate my-agent.yaml

# Output: Manifest is valid

Validation is local; no daemon connection is required.

Step 4: Spawn the Agent

ash spawn my-agent.yaml

# Output: Spawned agent <UUID>

Step 5: List Running Agents

ash list

# ID NAME STATE TRUST

# 550e8400-e29b-41d4-a716-446655440000 my-first-agent Plan sandboxed

Step 6: Inspect the Agent

ash info <UUID>

Step 7: Invoke a Tool on the Agent

ash tools invoke <UUID> echo '{"message": "hello"}'

# Output: {"message": "hello"}

Step 8: View the Audit Log

ash audit --agent <UUID>

Step 9: Terminate the Agent

ash kill <UUID>

Running the Example Agent

The example-agent binary demonstrates the full Plan→Act→Observe loop using lm.complete. It requires an OPENROUTER_API_KEY:

export OPENROUTER_API_KEY=sk-or-...

ash spawn etc/agents/example-agent.yaml

ash list

ash audit

The example agent will:

- Transition to

Plan, declare a plan step - Transition to

Act, invokelm.completewith its declared task - Append an observation with the result

- Transition to

Terminate

Next Steps

- Learn about Agent Manifests in depth

- Explore Capability Tokens

- Read the Developer Guide to write your own agent binary

Quick Reference

ash Command Cheat Sheet

Agent Lifecycle

ash spawn <manifest.yaml> # Spawn agent from manifest

ash validate <manifest.yaml> # Validate manifest (no daemon needed)

ash list # List all running agents (alias: ash ls)

ash info <agent-id> # Show agent details

ash kill <agent-id> # Terminate an agent

ash transition <agent-id> <state> # Force lifecycle transition (plan/act/observe/terminate)

ash ping # Check daemon is alive

ash status # Show daemon status

Tools

ash tools list # List all tools

ash tools list --agent <id> # Tools accessible to a specific agent

ash tools invoke <agent-id> <tool> <json> # Invoke a tool

ash tools schema <tool-name> # Show tool input/output schema

ash tools proposed # List pending dynamic tool proposals

ash tools approve <proposal-id> # Approve a tool proposal

ash tools deny <proposal-id> # Deny a tool proposal

Audit & Observations

ash audit # Show last 20 audit entries

ash audit --agent <id> # Filter by agent

ash audit --limit 100 # Show more entries

ash obs query <agent-id> # Query observation log

ash obs query <agent-id> --keyword "error" --limit 10

Secrets

ash secrets add <name> # Register secret (echo-off prompt)

ash secrets list # List secret names (no values)

ash secrets remove <name> # Delete a secret

ash secrets policy add --label "..." --secret "name" --tool "web.fetch"

ash secrets policy list # List policies

ash secrets policy remove <id> # Remove a policy

Memory & Blackboard

ash memory read <agent-id> <key> # Read persistent memory

ash memory write <agent-id> <key> <json> # Write persistent memory

ash memory list <agent-id> # List memory keys

ash bb read <agent-id> <key> # Read blackboard

ash bb write <agent-id> <key> <json> # Write blackboard

ash bb list <agent-id> # List blackboard keys

Message Bus

ash bus publish <agent-id> <topic> <json> # Publish to topic

ash bus subscribe <agent-id> <pattern> # Subscribe to topic pattern

ash bus poll <agent-id> # Drain mailbox

Workspace

ash workspace snapshot <agent-id> # Take snapshot

ash workspace history <agent-id> # List snapshots

ash workspace diff <agent-id> # Show changes vs last snapshot

ash workspace rollback <agent-id> <index> # Roll back

ash workspace commit <agent-id> # Commit overlay

Scheduler

ash scheduler stats # Global scheduler stats

ash scheduler info <agent-id> # Per-agent stats

ash scheduler set-deadline <agent-id> <rfc3339> # Set deadline

ash scheduler clear-deadline <agent-id> # Clear deadline

ash scheduler set-priority <agent-id> <1-100> # Set priority

Hierarchy & Escalations

ash hierarchy show # Render agent tree

ash hierarchy escalations # List pending escalations

ash pending # List pending HITL approval requests

ash approve <request-id> # Approve a pending request

ash deny <request-id> # Deny a pending request

Grants

ash grants list <agent-id> # List capability grants

ash grants revoke <agent-id> <grant-id> # Revoke a grant

Anomaly Detection

ash anomaly list # List recent anomaly events

ash anomaly list --agent <id> # Filter by agent

Replay

ash replay timeline <agent-id> # Show full execution timeline

ash replay timeline <agent-id> --since <t> # Since timestamp (RFC3339)

ash replay rollback <agent-id> <index> # Roll back workspace

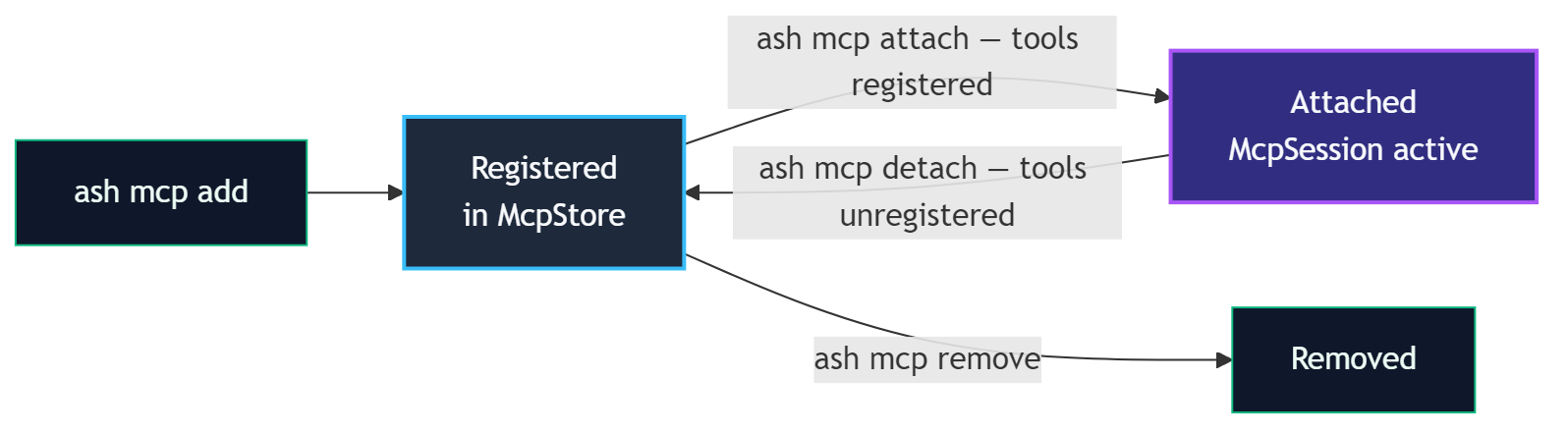

MCP

ash mcp add <name> stdio --command <cmd> # Register stdio MCP server

ash mcp add <name> http --url <url> # Register HTTP MCP server

ash mcp list # List registered servers

ash mcp remove <name> # Remove a server

ash mcp attach <agent-id> <server-name> # Attach server to agent

ash mcp detach <agent-id> <server-name> # Detach server from agent

Agent Store

ash agent install <manifest.yaml> # Install agent

ash agent list # List installed agents

ash agent run <name> # Run installed agent by name

ash agent remove <name> # Remove installed agent

ash agent capability-sheet <manifest> # Print capability sheet

Key Environment Variables

| Variable | Description |

|---|---|

SCARAB_AGENT_ID | UUID assigned to this agent by agentd |

SCARAB_SOCKET | Path to agentd Unix socket |

SCARAB_TASK | Task string from spec.task in manifest |

SCARAB_MODEL | Model ID from spec.model in manifest |

OPENROUTER_API_KEY | API key for LLM tools |

RUST_LOG | Log verbosity (error/warn/info/debug/trace) |

Capability Quick Reference

| Capability | Grants |

|---|---|

tool.invoke:echo | Use the echo tool |

tool.invoke:lm.complete | Use LLM completion |

tool.invoke:fs.read | Read files (scoped by fs.read:<path>) |

tool.invoke:fs.write | Write files (scoped by fs.write:<path>) |

tool.invoke:web.fetch | Fetch URLs |

tool.invoke:web.search | Web search |

tool.invoke:sandbox.exec | Execute code in sandbox |

memory.read:* | Read persistent memory |

memory.write:* | Write persistent memory |

obs.append | Append to observation log |

obs.query | Query observation log |

secret.use:<name> | Reference a named secret |

sandbox.exec | Alias for sandbox.exec tool |

Agents and Lifecycle

What is an Agent?

An agent in Scarab-Runtime is a process spawned and managed by agentd. Unlike ordinary OS processes, every agent has:

- A UUID identity (

SCARAB_AGENT_ID) injected at spawn time - A lifecycle state tracked by the daemon

- A capability set derived from its manifest

- A sandbox enforced at the kernel level

- An audit trail of every action it takes

- A workspace (overlay filesystem) for isolated file operations

- A persistent memory store (SQLite-backed key-value)

- An observation log (hash-chained NDJSON)

Lifecycle State Machine

Every agent follows this state machine:

Terminate is reachable from any state except Init.

State Descriptions

| State | Description |

|---|---|

Init | Agent is being set up. Sandbox, cgroups, and capability profiles are applied. The agent binary has not yet received control. |

Plan | Agent is reasoning. Typically calls agent.plan() to declare steps, may read memory or query observations. |

Act | Agent is executing. Tool invocations are expected in this state. |

Observe | Agent is processing results. Typically calls agent.observe() to record what happened. |

Terminate | Agent is shutting down. Resources are released and the audit log entry is finalized. |

State Transitions

Agents transition by calling agent.transition(state) (via the SDK) or by receiving an IPC Transition request. Transitions are validated:

Init → Planis the first valid transition after spawnPlan ↔ Act ↔ Observeare the normal loop statesTerminatefrom any non-Initstate is always valid- Invalid transitions return an error without changing state

Operators can force a transition with ash transition <agent-id> <state>.

Agent Identity

When agentd spawns an agent, it injects:

SCARAB_AGENT_ID=<uuid> # Agent's unique identifier

SCARAB_SOCKET=/run/agentd/agentd.sock # Path to daemon socket

SCARAB_TASK=<text> # From spec.task (if set)

SCARAB_MODEL=<model-id> # From spec.model (if set)

The agent binary reads these with std::env::var or the Agent::from_env() SDK helper.

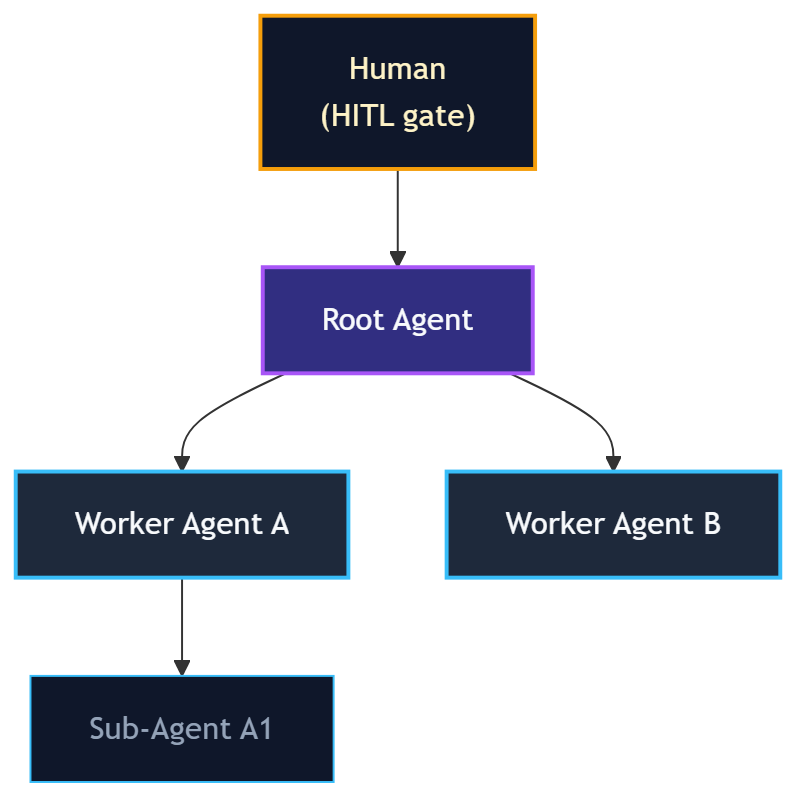

Agent Hierarchy

Agents form a tree. The agent that spawns another becomes its parent. Escalations (capability requests, anomaly alerts) travel up the tree. The root agent escalates to the human HITL gate.

Discover Agents

Agents can discover each other by capability pattern:

ash discover <agent-id> "bus.*"

This finds all running agents that have any bus.* capability.

Agent Manifests

Agent manifests are YAML files that fully declare an agent's identity, capabilities, resource limits, and lifecycle behavior. agentd reads a manifest at spawn time to set up sandboxing, derive AppArmor and seccomp profiles, and enforce capability checks.

Minimal Example

apiVersion: scarab/v1

kind: AgentManifest

metadata:

name: hello-agent

version: 1.0.0

spec:

trust_level: untrusted

capabilities:

- tool.invoke:echo

Full Field Reference

apiVersion: scarab/v1 # Required. Always "scarab/v1".

kind: AgentManifest # Required. Always "AgentManifest".

metadata:

name: <string> # Required. Unique agent name.

version: <semver> # Required. e.g. "1.0.0"

description: <string> # Optional. Human-readable description.

spec:

trust_level: <level> # Required. untrusted|sandboxed|trusted|privileged

task: <string> # Optional. Goal text. Injected as SCARAB_TASK.

model: <model-id> # Optional. LLM model. Injected as SCARAB_MODEL.

resources: # Optional.

memory_limit: <size> # e.g. 512Mi, 2Gi

cpu_shares: <int> # cgroup cpu.shares value

max_open_files: <int> # file descriptor limit

capabilities: # Required. List of capability strings.

- <capability>

network: # Optional.

policy: none|local|allowlist|full

allowlist: # Required if policy is "allowlist"

- <host:port>

lifecycle: # Optional.

restart_policy: never|on-failure|always

max_restarts: <int>

timeout_secs: <int>

command: <path> # Optional. Binary to spawn.

args: # Optional. Arguments passed to the binary.

- <arg>

secret_policy: # Optional. Pre-approval rules for credential access.

- label: <string>

secret_pattern: <glob>

tool_pattern: <glob>

host_pattern: <glob> # Optional

expires_at: <iso8601> # Optional

max_uses: <int> # Optional

agent_matcher: # Optional

type: any|by_id|by_name_glob|by_trust_level

id: <uuid>

pattern: <glob>

level: <trust-level>

# Agent Store / runtime fields (Phase 8.0)

runtime: native|python|node # Execution runtime

entrypoint: <path> # Script entrypoint (for python/node)

packages: # Packages to install for the runtime

- <package-name>

# MCP auto-attach (Phase 8.1)

mcp_servers: # MCP servers to auto-attach at spawn

- <server-name>

# Scheduler fields

workspace: # Workspace configuration

auto_snapshot: <bool> # Enable automatic snapshots (default: true)

snapshot_interval_secs: <int>

Examples

Minimal Sandboxed Agent

apiVersion: scarab/v1

kind: AgentManifest

metadata:

name: file-organizer

version: 1.0.0

spec:

trust_level: sandboxed

capabilities:

- fs.read

- fs.write:/home/agent/workspace/**

- tool.invoke:fs.read

- tool.invoke:fs.write

- tool.invoke:fs.list

network:

policy: none

lifecycle:

restart_policy: on-failure

max_restarts: 3

timeout_secs: 3600

LLM Agent with Task and Model

apiVersion: scarab/v1

kind: AgentManifest

metadata:

name: research-agent

version: 1.0.0

description: Researches topics using web search and LLM.

spec:

task: "Summarize the latest news about renewable energy in 3 bullet points."

model: "anthropic/claude-opus-4-6"

trust_level: trusted

capabilities:

- tool.invoke:lm.complete

- tool.invoke:web.search

- tool.invoke:web.fetch

- memory.read:*

- memory.write:*

- obs.append

network:

policy: full

lifecycle:

restart_policy: never

timeout_secs: 300

command: target/debug/example-agent

Agent Using Secrets

apiVersion: scarab/v1

kind: AgentManifest

metadata:

name: api-caller

version: 1.0.0

spec:

trust_level: trusted

capabilities:

- tool.invoke:web.fetch

- secret.use:my-api-key

network:

policy: allowlist

allowlist:

- "api.example.com:443"

secret_policy:

- label: "API access"

secret_pattern: "my-api-key"

tool_pattern: "web.fetch"

host_pattern: "api.example.com"

Validation

ash validate path/to/manifest.yaml

Validation checks:

- Required fields are present

trust_levelis a valid value- Capabilities are parseable

- Network policy is consistent

apiVersionandkindare correct

Capability Tokens

Capability tokens are the primary access-control mechanism in Scarab-Runtime. An agent can only invoke tools, read/write memory, or access secrets that are listed in its manifest capabilities.

Token Format

<domain>.<action>

<domain>.<action>:<scope>

Examples:

fs.read

fs.write:/home/agent/workspace/**

tool.invoke:echo

tool.invoke:*

net.connect:api.example.com:443

secret.use:my-api-key

secret.use:db-*

memory.read:config

memory.write:*

obs.append

obs.query

sandbox.exec

Glob Matching on Scopes

The :<scope> portion supports glob matching:

| Pattern | Matches |

|---|---|

fs.read | Read any file (no scope restriction) |

fs.write:/home/agent/** | Write files anywhere under /home/agent/ |

tool.invoke:echo | Invoke only the echo tool |

tool.invoke:fs.* | Invoke any tool in the fs namespace |

tool.invoke:* | Invoke any tool |

secret.use:openai-* | Use any secret whose name starts with openai- |

net.connect:*.example.com:443 | Connect to any subdomain of example.com on port 443 |

Glob rules:

*matches any single path segment (no/)**matches zero or more path segments (including/)

Capability Domains

tool.invoke

tool.invoke:<tool-name>

Grants permission to invoke a named tool. Without this, the tool registry will reject the call.

fs

fs.read:<path-glob>

fs.write:<path-glob>

Used by filesystem tools to validate the requested path against the agent's allowed scopes. If an agent has tool.invoke:fs.read but not fs.read:/etc/**, it cannot read /etc/passwd.

Note: tool.invoke:fs.read and fs.read are complementary; the tool dispatch layer checks tool.invoke:fs.read, while the fs.read tool handler additionally checks fs.read:<path>.

memory

memory.read:<key-pattern>

memory.write:<key-pattern>

Scoped to key patterns. memory.read:* allows reading any key. memory.read:config allows only the config key.

secret.use

secret.use:<secret-name-glob>

Declares which secrets the agent may reference in tool arguments using the {{secret:<name>}} handle syntax.

obs

obs.append

obs.query

obs.append: write to this agent's observation log.

obs.query: read observation logs (own or other agents').

sandbox.exec

sandbox.exec

Allows use of the sandbox.exec tool to execute code in a throwaway namespace sandbox.

net.connect

net.connect:<host>:<port>

Low-level network connection permission (enforced by nftables). Higher-level network policy (spec.network) is the simpler interface for most use cases.

agent.discover

agent.discover

Allows querying the agent discovery service to find other agents by capability pattern.

Capability Enforcement

Every IPC request to agentd that involves a tool invocation goes through this check:

- Is the tool in the registry? (

ToolError::NotFound) - Does the agent have the required capability for this tool? (

ToolError::AccessDenied) - Does the tool require human approval? If so, queue it and return

RequiresApproval. - Call the tool handler. The handler may perform additional scope checks (e.g.,

fs.writevalidates the path againstfs.write:*capabilities).

Capability Sets in the Manifest

The spec.capabilities list is parsed into a CapabilitySet at spawn time. The set is stored in the agent's state and injected into every ToolContext when a tool is dispatched.

spec:

capabilities:

- tool.invoke:lm.complete

- tool.invoke:web.fetch

- tool.invoke:fs.read

- fs.read:/home/agent/**

- memory.read:*

- memory.write:notes

- obs.append

- secret.use:openai-key

Trust Levels

Trust levels define the tier of privilege an agent operates at. They form a strict ordering:

untrusted < sandboxed < trusted < privileged

The trust level determines:

- Which capabilities can be declared in the manifest

- Which kernel enforcement profiles are applied

- What resources the agent can access by default

Levels

untrusted

Maximum isolation. Reserved for agents that should have no meaningful access to the system, such as untrusted third-party code, test agents, or proofs of concept.

Default capabilities: None (must be explicitly listed)

Typical use: tool.invoke:echo only

spec:

trust_level: untrusted

capabilities:

- tool.invoke:echo

sandboxed

Standard level for most agents. Has access to a curated set of tools and can read/write within declared path scopes. Cannot access host-level resources.

Typical capabilities: fs.read, fs.write:<path>, tool.invoke:*, memory.read:*, obs.append

spec:

trust_level: sandboxed

capabilities:

- tool.invoke:lm.complete

- tool.invoke:fs.read

- fs.read:/home/agent/**

- memory.read:*

- memory.write:*

- obs.append

trusted

Broader access. Can write files to wider path scopes, access local network, use secrets, and spawn child agents.

Typical capabilities: All sandboxed capabilities plus fs.write:<wide-path>, net.local, secret.use:* (subject to policy)

spec:

trust_level: trusted

capabilities:

- tool.invoke:lm.complete

- tool.invoke:web.fetch

- tool.invoke:web.search

- tool.invoke:fs.read

- tool.invoke:fs.write

- fs.write:/home/agent/research/**

- secret.use:openrouter-key

- obs.append

privileged

Full access within the agent world. Reserved for system-level agents (e.g. the root orchestrator). privileged does not mean host root access; the runtime system directories (/usr, /lib, /bin) are always outside every agent's write scope.

Note: Use privileged sparingly. Most agent workloads should use sandboxed or trusted.

spec:

trust_level: privileged

capabilities:

- "*.*"

Enforcement

Trust levels affect the kernel profiles derived at spawn time:

untrusted: strictest seccomp allowlist, most restrictive AppArmor profile, lowest cgroup cpu.sharessandboxed: standard seccomp allowlist, AppArmor profile for workspace access onlytrusted: expanded seccomp, AppArmor allows broader network and file accessprivileged: minimal restrictions, but still isolated from host system paths

Capability Escalation

An agent cannot declare capabilities that exceed its trust level. The daemon validates capability declarations at spawn time. If an agent at sandboxed trust level attempts to declare a capability reserved for trusted agents, the manifest is rejected.

Runtime capability grants (via ash grants or the escalation hierarchy) can temporarily extend an agent's capabilities within the bounds of its trust level.

Audit Trail

Every action taken by every agent is recorded in an append-only, tamper-evident audit log. The audit trail is the definitive record of what happened in the system.

Properties

- Append-only - entries are never deleted or modified

- Hash-chained - each entry includes the SHA-256 hash of the previous entry, making tampering detectable

- Ring buffer - the daemon stores the most recent N entries in memory; older entries are written to disk

- Queryable - filter by agent ID, time range, or action type

Entry Structure

Each audit entry contains:

{

"id": "<uuid>",

"timestamp": "2026-02-22T12:34:56.789Z",

"agent_id": "<uuid>",

"agent_name": "my-agent",

"action": "tool_invoked",

"detail": "echo({\"message\": \"hello\"})",

"outcome": "success",

"prev_hash": "<sha256-hex>",

"hash": "<sha256-hex>"

}

The hash field is SHA-256(prev_hash + timestamp + agent_id + action + detail + outcome). Verifying the chain means checking that each entry's hash matches its declared inputs and that each prev_hash matches the previous entry's hash.

Querying the Audit Log

# Last 20 entries (all agents)

ash audit

# Filter by agent

ash audit --agent <uuid>

# Show more entries

ash audit --limit 100

What Gets Audited

Every IPC request handled by agentd that results in an action produces an audit entry. This includes:

| Action | Audit Entry |

|---|---|

| Agent spawned | agent_spawned |

| Lifecycle transition | state_transition |

| Tool invoked (success) | tool_invoked |

| Tool denied (capability) | access_denied |

| Tool queued for approval | approval_requested |

| Tool approved/denied | approval_resolved |

| Memory read/write | memory_access |

| Observation appended | observation_appended |

| Workspace snapshot | workspace_snapshot |

| Secret used | secret_used |

| Anomaly detected | anomaly_detected |

| Capability grant issued | capability_granted |

| Capability grant revoked | capability_revoked |

| Plan declared | plan_declared |

| Plan revised | plan_revised |

| Agent terminated | agent_terminated |

Anomaly Detection

The audit trail feeds the behavioral anomaly detector (agentd/src/anomaly.rs). The detector runs four rules:

- Volume spike: unusually high number of tool invocations in a short window

- Scope creep: repeated access denials suggesting capability probing

- Repeated kernel denials: seccomp/AppArmor denials indicating containment pressure

- Secret probe / canary leak: attempts to access undeclared secrets, or canary token appearing in a tool result

When an anomaly is detected, an anomaly_detected audit entry is written and an escalation message is sent up the agent hierarchy.

Tamper Detection

To verify the audit chain:

# (planned: ash audit verify)

# For now, query entries and verify hashes manually

ash audit --limit 1000

If any entry's hash does not match SHA-256(prev_hash + fields), the chain has been tampered with.

Managing Agents

This page covers the day-to-day operations of spawning, inspecting, and terminating agents.

Spawning an Agent

ash spawn path/to/manifest.yaml

# Output: Spawned agent 550e8400-e29b-41d4-a716-446655440000

agentd will:

- Parse and validate the manifest

- Create an overlayfs workspace for the agent

- Derive AppArmor and seccomp profiles from the manifest

- Set up cgroups for resource limits

- Apply nftables rules for network policy

- Spawn the agent binary with

SCARAB_AGENT_IDandSCARAB_SOCKETinjected - Transition the agent to

Initstate, thenPlan

Listing Agents

ash list

# or

ash ls

Output:

ID NAME STATE TRUST UPTIME

550e8400-e29b-41d4-a716-446655440000 my-agent Plan sandboxed 0:01:23

6ba7b810-9dad-11d1-80b4-00c04fd430c8 worker Act trusted 0:00:05

Inspecting an Agent

ash info <agent-id>

Shows:

- Agent ID, name, version, description

- Trust level and lifecycle state

- Capabilities list

- Resource limits

- Network policy

- Uptime and spawn timestamp

Terminating an Agent

ash kill <agent-id>

The daemon transitions the agent to Terminate, waits for it to exit gracefully, then cleans up its workspace and releases cgroup resources.

To force-terminate (SIGKILL after timeout):

# (if the agent hangs, the daemon's timeout_secs will force-kill it)

Forcing a State Transition

Administrators can override an agent's lifecycle state:

ash transition <agent-id> plan

ash transition <agent-id> act

ash transition <agent-id> observe

ash transition <agent-id> terminate

Use with care; forcing a transition without the agent's cooperation may leave the agent in an inconsistent state.

Validating a Manifest

Validation runs locally without connecting to the daemon:

ash validate manifest.yaml

# Manifest is valid

# or

# Error: missing required field 'spec.trust_level'

Monitoring Agent State

For production monitoring, watch the daemon's structured log output:

RUST_LOG=info agentd 2>&1 | grep agent_id=<uuid>

Or query the audit log periodically:

ash audit --agent <uuid> --limit 5

Daemon Status

ash status

Shows: daemon version, socket path, number of running agents, uptime, and resource usage summary.

Handling Agent Crashes

If an agent process exits unexpectedly:

restart_policy: on-failurewill restart it (up tomax_restartstimes)restart_policy: never(default) leaves it terminated- A

process_exitedaudit entry is written with the exit code - Anomaly detection may fire if the crash pattern is unusual

Human-in-the-Loop Approvals

Scarab-Runtime supports human-in-the-loop (HITL) gating for sensitive tool invocations. When an agent invokes a tool that requires approval, the request is queued and the agent blocks until the operator approves or denies it.

How it Works

- Agent calls a tool marked

requires_approval: true(e.g.sensitive-op) agentdqueues the request and returns aRequiresApprovalresponse to the agent- The agent waits (blocking on IPC)

- The operator sees the pending request with

ash pending - The operator approves or denies it

- The agent's blocked call returns with the result or a denial error

Viewing Pending Requests

ash pending

Output:

REQUEST-ID AGENT TOOL CREATED

a1b2c3d4-... my-agent sensitive-op 2026-02-22T12:34:56Z

Approving a Request

ash approve <request-id>

# With an operator token (for audit trail)

ash approve <request-id> --operator "alice"

Denying a Request

ash deny <request-id>

# With an operator token

ash deny <request-id> --operator "alice"

When denied, the agent receives a ToolFailed("denied by operator") error from invoke_tool().

Configuring Approval Timeouts

Each tool can declare an approval_timeout_secs. If the operator does not respond within the timeout:

- The request is automatically denied

- The agent receives a timeout error

- An audit entry is written

The sensitive-op built-in tool has a 300-second (5 minute) timeout.

Custom Approval-Required Tools

When registering a dynamic tool (or in a future tool plugin), set requires_approval: true in the ToolInfo:

#![allow(unused)] fn main() { ToolInfo { name: "deploy-to-production".to_string(), requires_approval: true, approval_timeout_secs: Some(600), // 10 minutes // ... } }

Escalation Hierarchy

HITL approvals that are not handled by an operator escalate up the agent hierarchy. A parent agent may be configured to auto-approve or auto-deny certain request types, filtering the interrupt load before it reaches the human.

See Hierarchy and Escalations for details.

Workspace Snapshots

Every agent runs in an overlayfs workspace, a copy-on-write filesystem view. All writes go to the agent's upper layer; the lower layer (base system) is never modified. Snapshots checkpoint the upper layer, enabling rollback if an agent makes unwanted changes.

How Overlayfs Works

Base filesystem (lower, read-only)

+

Agent's upper layer (read-write)

=

Agent's merged view (what the agent sees)

When the agent writes a file, the write goes to the upper layer only. The base filesystem is untouched.

Taking a Snapshot

ash workspace snapshot <agent-id>

# Output: Snapshot 3 taken for agent <uuid>

Snapshots can also be triggered automatically. Set in the manifest:

spec:

workspace:

auto_snapshot: true

snapshot_interval_secs: 300 # every 5 minutes

Listing Snapshots

ash workspace history <agent-id>

Output:

INDEX CREATED SIZE

0 2026-02-22T12:00:00Z 1.2 MB

1 2026-02-22T12:05:00Z 1.8 MB

2 2026-02-22T12:10:00Z 2.1 MB

Index 0 is the oldest, highest index is the most recent.

Viewing Changes

ash workspace diff <agent-id>

Shows files changed in the current overlay vs the last snapshot.

Rolling Back

ash workspace rollback <agent-id> <index>

# e.g.

ash workspace rollback abc-123 1

Rolls back the agent's upper layer to the state at snapshot index 1. The agent continues running with the rolled-back view.

Committing

ash workspace commit <agent-id>

Promotes the current overlay to a permanent snapshot and clears the upper layer. Use this when you want to "bake in" the agent's changes as a new baseline.

Replay Integration

The replay debugger uses workspace snapshots to reconstruct an agent's state at any point in time. See Replay Debugger.

Storage

Workspace data is stored under the agent's working directory, managed by agentd. On cleanup (agent terminate), the upper layer is optionally preserved or discarded based on configuration.

Persistent Memory

Agents have access to a per-agent persistent key-value memory store backed by SQLite. Memory survives agent restarts and daemon restarts. It is independent of the workspace filesystem.

Overview

- Scope: Per-agent. Each agent has its own isolated namespace.

- Backend: SQLite database managed by

agentd - Persistence: Survives agent and daemon restarts

- Versioning: Every key has a version number, enabling optimistic compare-and-swap

- TTL: Optional per-entry time-to-live

Reading a Value

Via SDK:

#![allow(unused)] fn main() { let value = agent.memory_get("config").await?; }

Via ash:

ash memory read <agent-id> config

# Output: {"theme": "dark", "max_retries": 3}

Writing a Value

Via SDK:

#![allow(unused)] fn main() { agent.memory_set("config", json!({"theme": "dark"})).await?; }

Via ash:

ash memory write <agent-id> config '{"theme": "dark"}'

# With TTL (expires after 3600 seconds)

ash memory write <agent-id> session-token '"abc123"' --ttl 3600

Compare-and-Swap

For safe concurrent updates, use CAS:

ash memory cas <agent-id> counter 2 '"new-value"'

# Expected version = 2; if current version != 2, the write is rejected

Via IPC request:

{

"MemoryWriteCas": {

"agent_id": "<uuid>",

"key": "counter",

"expected_version": 2,

"value": 42,

"ttl_secs": null

}

}

Deleting a Key

ash memory delete <agent-id> session-token

Listing Keys

ash memory list <agent-id>

# With glob filter

ash memory list <agent-id> --pattern "cache.*"

Capabilities Required

spec:

capabilities:

- memory.read:* # Read any key

- memory.write:* # Write any key

- memory.read:config # Read only the "config" key

- memory.write:notes # Write only the "notes" key

Difference from Blackboard

| Feature | Persistent Memory | Blackboard |

|---|---|---|

| Scope | Per-agent | Shared (all agents) |

| Persistence | SQLite, survives restarts | In-memory (lost on restart) |

| TTL support | Yes | Yes |

| CAS | Yes (version-based) | Yes (value-based) |

| Access control | memory.read/write capabilities | bb.read/write capabilities |

Observation Logs

The observation log is a per-agent, hash-chained, append-only NDJSON log that records the agent's reasoning trace: what it did and what it learned. Unlike the audit trail (which records security events at the daemon level), observations are agent-authored records of intent and outcome.

What is an Observation?

An observation is a structured record written by the agent to document a step in its reasoning:

{

"id": "<uuid>",

"timestamp": "2026-02-22T12:34:56Z",

"agent_id": "<uuid>",

"action": "searched_web",

"result": "Found 5 results about renewable energy",

"tags": ["research", "web-search"],

"prev_hash": "<sha256>",

"hash": "<sha256>"

}

Like the audit trail, observations are hash-chained for tamper detection.

Writing an Observation

Via SDK:

#![allow(unused)] fn main() { agent.observe( "searched_web", "Found 5 results about renewable energy", vec!["research".to_string(), "web-search".to_string()], ).await?; }

Via IPC directly:

{

"ObservationAppend": {

"agent_id": "<uuid>",

"action": "completed_task",

"result": "Summary written to /workspace/report.md",

"tags": ["output", "success"],

"metadata": null

}

}

Querying Observations

# All observations for an agent

ash obs query <agent-id>

# With filters

ash obs query <agent-id> --keyword "error" --limit 10

ash obs query <agent-id> --since "2026-02-22T12:00:00Z"

ash obs query <agent-id> --until "2026-02-22T13:00:00Z"

# Query another agent's log (requires obs.query capability)

ash obs query <my-agent-id> --target <other-agent-id>

Capabilities Required

spec:

capabilities:

- obs.append # Write observations

- obs.query # Read observations (own and others)

Storage

Observations are stored as NDJSON (newline-delimited JSON) files on disk, one file per agent. The hash chain links entries, making any post-hoc modification detectable.

Use Cases

- Debugging - understand what the agent was doing before a failure

- Replay - combined with workspace snapshots, reconstruct the agent's full reasoning trace

- Audit - agent-authored record complementing the daemon-level audit trail

- Training data - high-quality reasoning traces for fine-tuning

Message Bus

The message bus provides typed pub/sub messaging between agents. Agents publish messages to topics; other agents subscribe to topic patterns and poll for messages.

Topics

Topics are dot-separated strings, e.g.:

tasks.newresults.agent-abcscarab.escalation.<agent-id>(reserved for escalations)

Publishing

Via ash:

ash bus publish <agent-id> tasks.new '{"task": "summarize /workspace/report.md"}'

Via SDK (using the underlying IPC client):

#![allow(unused)] fn main() { agent.client.bus_publish(agent.id, "tasks.new", json!({"task": "..."})).await?; }

Subscribing

ash bus subscribe <agent-id> "tasks.*"

Subscriptions use glob patterns. tasks.* matches tasks.new, tasks.urgent, etc.

Polling

ash bus poll <agent-id>

# Drains and prints all pending messages

Via SDK:

#![allow(unused)] fn main() { // Poll for escalations specifically let escalations = agent.pending_escalations().await?; // Poll the bus directly match client.bus_poll(agent.id).await? { Response::Messages { messages } => { /* process */ } _ => {} } }

Unsubscribing

ash bus unsubscribe <agent-id> "tasks.*"

Escalation Topic

The escalation system uses the bus with reserved topics:

scarab.escalation.<target-agent-id>

When the anomaly detector or hierarchy escalation fires, it publishes a message to the parent agent's escalation topic. The Agent::pending_escalations() SDK method filters bus messages for these topics.

Capabilities Required

spec:

capabilities:

- tool.invoke:bus.publish # (future: explicit bus capability)

- tool.invoke:bus.subscribe

- tool.invoke:bus.poll

Currently bus operations are gated by trust level and the general IPC dispatch.

Blackboard

The blackboard is a shared, in-memory key-value store accessible by all agents. It is intended for coordination data that multiple agents need to read and write concurrently.

Unlike Persistent Memory, the blackboard is:

- Shared across all agents (not per-agent)

- In-memory (lost when

agentdrestarts) - Supports TTL for ephemeral coordination state

Reading

ash bb read <agent-id> my-key

The <agent-id> is the agent making the request (used for capability checks and audit).

Writing

ash bb write <agent-id> my-key '{"status": "ready"}'

# With TTL (expires after 60 seconds)

ash bb write <agent-id> lock '{"holder": "agent-abc"}' --ttl 60

Compare-and-Swap

For safe concurrent coordination:

ash bb cas <agent-id> lock '{"holder": "agent-abc"}' '{"holder": "agent-xyz"}'

CAS succeeds only if the current value equals expected. If the key doesn't exist, use null as the expected value:

ash bb cas <agent-id> lock null '{"holder": "agent-xyz"}'

Deleting

ash bb delete <agent-id> my-key

Listing Keys

ash bb list <agent-id>

# With glob filter

ash bb list <agent-id> --pattern "task.*"

Use Cases

- Work queue coordination - agents claim items from a shared task list using CAS

- Distributed locking - agents acquire a lock via CAS before entering a critical section

- Status broadcasting - one agent writes its status; others read it without direct messaging

- Configuration sharing - a root agent writes config; worker agents read it

Example: Distributed Lock

# Agent A tries to acquire a lock

ash bb cas agent-a lock null '"agent-a"'

# If success (lock was unclaimed), agent-a proceeds

# Agent B tries to acquire the same lock

ash bb cas agent-b lock null '"agent-b"'

# Fails if agent-a holds it (current value is not null)

# Agent A releases the lock

ash bb cas agent-a lock '"agent-a"' null

Scheduler

Scarab-Runtime includes a cost-aware and deadline-aware scheduler that manages agent priorities and enforces per-agent budgets.

Overview

The scheduler tracks:

- Token cost accumulated by each agent (from

lm.completeand other cost-bearing tools) - Deadlines set by operators or agents

- Priority (1–100, higher = higher priority)

- Budget (maximum allowed cost before the agent is paused or terminated)

Viewing Stats

# Global stats: tool cost totals + all agent summaries

ash scheduler stats

# Per-agent info

ash scheduler info <agent-id>

Output includes: current priority, cost accumulated, deadline (if set), and budget usage.

Setting a Deadline

ash scheduler set-deadline <agent-id> 2026-12-31T00:00:00Z

Deadlines must be in RFC3339 format. The scheduler boosts the priority of agents approaching their deadline automatically.

Clearing a Deadline

ash scheduler clear-deadline <agent-id>

Setting Priority

ash scheduler set-priority <agent-id> 80

Priority range: 1–100. Default: 50. Higher priority agents get preferential tool dispatch when the system is under load.

Budget Enforcement

Declare a cost budget in the manifest:

spec:

resources:

# (budget is declared via scheduler config, not manifest resources directly)

When an agent exceeds its budget, the scheduler emits an anomaly event and optionally pauses the agent pending operator review.

Deadline Priority Boost

The scheduler continuously monitors deadlines. As an agent's deadline approaches:

- >1h remaining: normal priority

- 30min–1h: priority boosted by 10

- <30min: priority boosted by 25

- Past deadline: anomaly event generated; agent priority set to maximum

User-declared deadlines take priority over agent-declared deadlines.

Tool Cost Tracking

Each built-in tool has an estimated_cost field (fractional units). The scheduler accumulates cost per agent and per tool type. Use ash scheduler stats to see cost breakdowns.

| Tool | Estimated Cost |

|---|---|

echo | 0.1 |

fs.read | 0.1 |

fs.write | 0.2 |

web.fetch | 0.1 |

web.search | 0.5 |

lm.complete | 1.0 (plus actual token cost) |

lm.embed | 0.1 |

sandbox.exec | 1.0 |

sensitive-op | 5.0 |

Hierarchy and Escalations

Agents form a parent-child hierarchy tree. Escalations travel up this tree before reaching the human HITL gate, keeping human interrupt rates low.

The Hierarchy Tree

When agent A spawns agent B, A becomes B's parent. The root agent has no parent and escalates directly to the human.

agentd tracks this tree. View it with:

ash hierarchy show

Escalation Flow

When an agent encounters a situation requiring escalation (capability request beyond its manifest, anomaly alert, plan deviation requiring human review):

- The escalation is sent to the agent's parent via the bus topic

scarab.escalation.<parent-id> - The parent agent receives the escalation via

agent.pending_escalations() - The parent can auto-resolve it (approve, deny, or reroute) or escalate further up

- Only the root agent escalates to the human HITL gate

Viewing Pending Escalations

ash hierarchy escalations

Lists agents with unresolved escalations in their mailbox.

Polling Escalations (SDK)

#![allow(unused)] fn main() { let escalations = agent.pending_escalations().await?; for esc in escalations { println!("Escalation: {}", esc); // Decide: auto-resolve or escalate to parent } }

Types of Escalations

| Type | Trigger |

|---|---|

| Capability request | Agent attempts action outside its manifest |

| Anomaly alert | Anomaly detector fires (see Anomaly Detection) |

| Plan deviation | Agent deviates from declared plan (Strict mode) |

| HITL approval | A tool requires human approval and no parent handles it |

| Budget exceeded | Agent exceeds its cost budget |

Capability Grants via Escalation

When an agent needs a capability not in its manifest, it can request a runtime grant:

- Agent's tool call fails with

AccessDenied - Agent (or its SDK) sends a grant request

- Grant request escalates up the hierarchy

- Parent or human approves the grant

agentdissues a temporaryCapabilityGrantfor that agent

See Capability Grants for operator details.

Anomaly Detection

The anomaly detector is a background watchdog that continuously monitors the audit trail for behavioral patterns that indicate a compromised, malfunctioning, or misbehaving agent.

Detection Rules

Four rules are currently implemented:

1. Volume Spike

Detects an unusually high number of tool invocations within a short time window for a single agent.

Trigger: More than N tool invocations in M seconds (configurable thresholds)

Response: volume_spike anomaly event, escalation to parent

2. Scope Creep

Detects repeated access-denied errors for a single agent, which may indicate capability probing or a prompt-injection attack attempting to discover what the agent can access.

Trigger: More than N access_denied audit entries in a short window

Response: scope_creep anomaly event, escalation to parent

3. Repeated Kernel Denials

Detects seccomp-BPF or AppArmor denials appearing in the audit trail, indicating an agent is pushing against its kernel-level sandbox.

Trigger: More than N kernel denial events

Response: kernel_denial_spike anomaly event, may trigger agent pause

4. Secret Probe / Canary Leak

Two sub-rules:

- Secret probe: Agent attempts to use a secret it hasn't declared (

secret.use:<name>not in capabilities) - Canary leak: A canary token (a fake secret value embedded in the store) appears in a tool result output

Trigger: secret_probe (attempt to resolve undeclared secret); canary_leak (canary value in scrubbed output)

Response: Immediate escalation, potential agent termination

Viewing Anomaly Events

# Recent events (all agents)

ash anomaly list

# Filter by agent

ash anomaly list --agent <uuid>

# Show more

ash anomaly list --limit 50

Output:

TIMESTAMP AGENT RULE DETAIL

2026-02-22T12:34:56Z my-agent scope_creep 5 denied calls in 30s

2026-02-22T12:35:01Z my-agent secret_probe attempted secret: db-password

Anomaly Escalation

When an anomaly fires:

- An

anomaly_detectedentry is written to the audit trail - An escalation message is published to

scarab.escalation.<parent-agent-id> - The parent agent receives it via

pending_escalations() - If unhandled, it bubbles to the root agent and then to the human

Canary Tokens

agentd embeds canary tokens in the secret store. These are fake secret values that are never legitimately used. If a canary value appears in a tool result (e.g., it leaked via a tool output), it indicates the secret scrubber may have been bypassed, or a tool is exfiltrating data.

Canary tokens are rotated periodically. Their values are never logged or shown to operators.

Replay Debugger

The replay debugger lets you reconstruct an agent's execution timeline from its audit log entries and workspace snapshots. It is useful for post-incident analysis and debugging complex multi-step agent failures.

Viewing the Timeline

ash replay timeline <agent-id>

Outputs a merged chronological timeline of:

- Lifecycle state transitions

- Tool invocations (with inputs and outputs)

- Observation log entries

- Workspace snapshot events

- Anomaly events

- Audit entries

2026-02-22T12:00:00Z [Init] agent spawned

2026-02-22T12:00:01Z [Plan] plan declared: ["search web", "summarize", "write report"]

2026-02-22T12:00:02Z [Act] web.search({"query": "renewable energy 2026"}) → 5 results

2026-02-22T12:00:03Z [Act] lm.complete({"prompt": "Summarize..."}) → 300 tokens

2026-02-22T12:00:05Z [Obs] observed: "summary generated"

2026-02-22T12:00:06Z [Act] fs.write({"path": "/workspace/report.md"}) → 1.2KB written

2026-02-22T12:00:07Z [Snapshot] workspace snapshot 1 taken

2026-02-22T12:00:08Z [Terminate] agent exited cleanly

Filtering by Time Range

ash replay timeline <agent-id> --since 2026-02-22T12:00:00Z

ash replay timeline <agent-id> --until 2026-02-22T12:00:05Z

ash replay timeline <agent-id> --since 2026-02-22T12:00:00Z --until 2026-02-22T12:00:05Z

Rolling Back Workspace

To restore the agent's workspace to a previous snapshot for inspection:

ash replay rollback <agent-id> <snapshot-index>

This is identical to ash workspace rollback but surfaced in the replay subcommand for convenience.

Combining with Observation Logs

For the full picture, combine the replay timeline with the observation log:

# Timeline

ash replay timeline <agent-id>

# Detailed observations

ash obs query <agent-id> --limit 100

Use Cases

- Post-incident analysis - understand exactly what an agent did before it failed or was terminated

- Debugging prompt injection - trace the tool calls that resulted from an unexpected prompt

- Regression testing - compare timelines between runs to identify behavioral changes

- Training data generation - export successful agent traces for fine-tuning

Capability Grants

Runtime capability grants allow operators (or parent agents) to temporarily extend an agent's capabilities beyond what is declared in its manifest, without requiring a restart.

Overview

Grants are temporary: they expire at a configurable time or when explicitly revoked. Every grant is logged in the audit trail.

Listing Grants

ash grants list <agent-id>

Output:

GRANT-ID CAPABILITY GRANTED-BY EXPIRES

f1e2d3c4-... fs.write:/tmp/** operator 2026-02-22T13:00:00Z

Revoking a Grant

ash grants revoke <agent-id> <grant-id>

The grant is immediately removed and the agent loses the capability.

How Grants Are Issued

Grants are typically issued through the escalation hierarchy:

- Agent calls a tool and receives

AccessDenied - Agent (or parent) initiates a grant request via IPC

- Parent agent or operator approves the request

agentdcreates aCapabilityGrantfor the agent with the requested capability and expiry- Subsequent tool calls succeed until the grant expires or is revoked

Operators can also issue grants directly via the ash CLI or IPC.

Capabilities That Can Be Granted

Any valid capability string can be granted at runtime:

fs.read:/tmp/report.pdf

fs.write:/tmp/**

tool.invoke:web.search

secret.use:temp-key

Grants are additive; they extend the manifest's capability set for the duration of the grant.

Audit Trail

Every grant issuance and revocation appears in the audit log:

{"action": "capability_granted", "capability": "fs.write:/tmp/**", "agent_id": "...", "granted_by": "operator"}

{"action": "capability_revoked", "capability": "fs.write:/tmp/**", "agent_id": "...", "revoked_by": "operator"}

Security Considerations

- Grants cannot exceed the agent's trust level ceiling

- Expired grants are automatically cleaned up by the daemon

- A

privilegedtrust-level agent cannot be granted capabilities outside whatprivilegedallows - Grants to

untrustedagents are limited to theuntrustedcapability set

Secrets Overview

The Sealed Credential Store manages sensitive values (API keys, passwords, tokens) across the full lifecycle: encrypted on disk, decrypted on demand, and scrubbed before they re-enter agent context.

Architecture

The store is composed of four layers:

| Component | Role |

|---|---|

SecretStore | Heap-only runtime store. Values held as Zeroizing<String>. All resolve/substitute calls return StoreLocked while locked. |

EncryptedSecretStore | SQLite-backed persistence. Secret values encrypted with AES-256-GCM; master key derived from a passphrase via Argon2id. KDF salt stored in a kdf_params table. |

SecretPolicyStore | SQLite-backed pre-approval policy store. Policies survive daemon restarts. |

SecretGrantStore | Ephemeral in-memory per-agent delegation grants. Grants expire on daemon restart by design. |

Design Goals

- Encrypted at rest: Secret values are encrypted with AES-256-GCM before being written to SQLite. The master key is derived from a passphrase (Argon2id, 64 MiB memory cost) and held in

Zeroizing<[u8; 32]>- zeroed on drop. - Opaque handles: Agents reference secrets as

{{secret:<name>}}in tool arguments; the daemon substitutes the plaintext only inside the tool dispatch layer. - Output scrubbing: Tool results are scanned for secret values before they are returned to the agent; matches are replaced with

[REDACTED:<name>]. - Pre-approval policies: Secret resolution requires an active policy matching the (secret, tool, host) triple - no open-ended blanket access.

- Audit trail: Every secret use is recorded with the matching policy ID.

- Canary tokens: Fake secrets embedded in the store detect exfiltration attempts.

Security Properties

| Property | Guarantee |

|---|---|

| Disk persistence | Values encrypted with AES-256-GCM; master key never written to disk |

| In-memory | Values held as Zeroizing<String>; zeroed by the allocator on drop |

| IPC responses | Values never appear in ash output or IPC responses |

| LLM context | Values scrubbed before re-entering LLM context |

| Agent memory | Agents never see plaintext; only {{secret:<name>}} handles are visible |

| Kernel | Secrets are not in environment variables (not in /proc/<pid>/environ) |

| Locked state | All resolve/substitute calls return StoreLocked until ash secrets unlock succeeds |

Lock / Unlock Lifecycle

First run:

ash secrets unlock # prompts for passphrase → init_passphrase() → store ready

Daemon restart:

ash secrets unlock # prompts for passphrase → derive_key() + load_all() → store ready

While running:

ash secrets lock # zeroize all in-memory plaintext; subsequent uses return StoreLocked

ash secrets unlock # re-derive key and reload from encrypted store

Key rotation:

ash secrets rekey # prompts for old + new passphrase; re-encrypts all blobs atomically

On first run (no KDF params in the database yet), ash secrets unlock automatically calls init_passphrase() to create a fresh encrypted store with the supplied passphrase. On subsequent starts it calls derive_key() against the stored salt.

Workflow

1. Operator unlocks / initialises the store:

ash secrets unlock

(prompted for passphrase; initialises on first run, reloads on restart)

2. Operator registers a secret:

ash secrets add my-api-key

(value read via echo-off prompt; persisted encrypted to SQLite if store is unlocked)

3. Operator creates a pre-approval policy:

ash secrets policy add \

--label "API access" \

--secret "my-api-key" \

--tool "web.fetch" \

--host "api.example.com"

4. Agent uses secret in a tool call:

{"url": "https://api.example.com/v1", "headers": {"Authorization": "Bearer {{secret:my-api-key}}"}}

5. agentd:

a. Finds active policy matching (my-api-key, web.fetch, api.example.com)

b. Substitutes plaintext in the tool input (never logged)

c. Calls the tool handler

d. Scrubs the tool output before returning to agent

e. Writes audit entry: "secret_used: my-api-key via web.fetch (policy: <uuid>)"

Declaring Secret Access in a Manifest

spec:

capabilities:

- secret.use:my-api-key

- secret.use:db-* # glob: all secrets starting with "db-"

secret_policy:

- label: "API access"

secret_pattern: "my-api-key"

tool_pattern: "web.fetch"

host_pattern: "api.example.com"

The secret_policy section in the manifest creates pre-approval policies automatically at spawn time. This enables unattended operation without requiring a separate ash secrets policy add step.

Registering Secrets

Secrets are registered with agentd via ash. The value is read via an echo-disabled prompt; it is never shown on screen or written to shell history.

Initialising the Store

Before registering secrets, the encrypted store must be unlocked. On first run this also initialises the store with your chosen master passphrase:

ash secrets unlock

# Enter master passphrase: (input hidden)

# Secret store initialised and unlocked.

On subsequent daemon restarts, the same command reloads all previously persisted secrets:

ash secrets unlock

# Enter master passphrase: (input hidden)

# Secret store unlocked. 3 secret(s) loaded.

Adding a Secret

ash secrets add my-api-key

# Enter value for 'my-api-key': (input hidden)

# Secret 'my-api-key' registered.

With an optional description:

ash secrets add openrouter-key --description "OpenRouter API key for LLM access"

The name you choose here is the identifier used in:

- Capability declarations:

secret.use:openrouter-key - Handle syntax in tool arguments:

{{secret:openrouter-key}} - Pre-approval policies:

--secret "openrouter-key"

If the store is unlocked (i.e. an encryption key is active), the value is also encrypted and persisted to the SQLite store immediately.

Listing Secrets

ash secrets list

# my-api-key

# openrouter-key

# db-password

Only names are shown; values are never displayed.

Removing a Secret

ash secrets remove my-api-key

# Secret 'my-api-key' removed.

Removing a secret wipes the plaintext from heap memory and deletes the encrypted blob from the SQLite store. Any pending tool calls using that handle will fail with secret not found.

Locking and Unlocking

# Lock: immediately zeroize all in-memory plaintext.

ash secrets lock

# Unlock: re-derive key from passphrase and reload from encrypted store.

ash secrets unlock

While the store is locked, all {{secret:<name>}} handle substitutions return StoreLocked. Use ash secrets lock before handing off a session or stepping away from a running daemon.

Rotating the Master Key

ash secrets rekey

# Enter current passphrase: (input hidden)

# Enter new passphrase: (input hidden)

# Confirm new passphrase: (input hidden)

# Master key rotated. All secrets re-encrypted.

rekey re-encrypts every secret blob in a single atomic SQLite transaction. The daemon remains fully operational throughout; no secrets need to be re-registered.

Naming Conventions

Choose names that are:

- Lowercase, kebab-case:

openrouter-key,db-password,analytics-api-key - Descriptive of the service, not the value:

stripe-keynotsk_live_abc123 - Consistent with the glob patterns you will use in policies:

db-*matchesdb-password,db-read-key, etc.

Secret Lifetime and Persistence

Secrets are encrypted and persisted to SQLite whenever the store is unlocked. On daemon restart, run ash secrets unlock with the master passphrase to restore all registered secrets automatically, with no re-registration required.

The SQLite database path is controlled by the AGENTD_ENCRYPTED_SECRETS_DB environment variable (default: /tmp/agentd_secrets.db). For production deployments, point this at a path on an encrypted filesystem.

Note: Per-agent delegation grants (

ash secrets grant) are intentionally ephemeral; they live only in the daemon's memory and must be re-granted after a restart.

Pre-Approval Policies

Every secret use requires an active pre-approval policy that matches the (secret, tool, host) triple. Without a matching policy, the request is denied, even if the agent has the secret.use:<name> capability.

Policies answer the question: "Is this agent allowed to use secret X with tool Y to reach host Z?"

Policies are persisted to SQLite and survive daemon restarts. No re-registration is required after restarting agentd.

Creating a Policy

ash secrets policy add \

--label "OpenRouter API access" \

--secret "openrouter-key" \

--tool "web.fetch" \

--host "openrouter.ai"

All fields:

ash secrets policy add \

--label "Nightly analytics batch" \

--secret "analytics-api-key" \ # glob: which secrets

--tool "web.fetch" \ # glob: which tools

--host "api.analytics.example.com" \ # optional: which host

--expires "2026-12-31T00:00:00Z" \ # optional: expiry

--max-uses 100 \ # optional: use limit

--trust-level "trusted" # optional: agent trust level floor

Policy Fields

| Field | Type | Description |

|---|---|---|

--label | string | Human-readable name for audit entries |

--secret | glob | Matches secret names (openai-*, db-password, *) |

--tool | glob | Matches tool names (web.fetch, sandbox.exec, *) |

--host | glob | Optional. Matches destination host for network tools |

--expires | RFC3339 | Optional. Policy stops applying after this time |

--max-uses | integer | Optional. Policy disabled after N auto-approvals |

--trust-level | level | Optional. Only applies to agents at this trust level or above |

Listing Policies

ash secrets policy list

# ID LABEL SECRET TOOL EXPIRES

# a1b2c3d4-... OpenRouter API access openrouter-* web.fetch never

# e5f6a7b8-... Nightly analytics batch analytics-* web.fetch 2026-12-31

Inspecting a Single Policy

ash secrets policy show a1b2c3d4-e5f6-...

# ID: a1b2c3d4-e5f6-...

# Label: OpenRouter API access

# Secret: openrouter-*

# Tool: web.fetch

# Host: openrouter.ai

# Expires: never

# Max uses: unlimited

# Use count: 42

# Created by: operator

# Created at: 2026-01-15T10:00:00Z

Removing a Policy

ash secrets policy remove <policy-id>

Immediately revokes the policy. Subsequent secret resolutions that would have matched this policy are denied.

Manifest-Declared Policies

Policies can be declared in the manifest so they are applied automatically at spawn time:

spec:

capabilities:

- secret.use:openrouter-key

secret_policy:

- label: "LLM completions"

secret_pattern: "openrouter-key"

tool_pattern: "web.fetch"

host_pattern: "openrouter.ai"

max_uses: 1000

Manifest-level policies are scoped to the specific agent spawned from that manifest. Runtime policies created via ash secrets policy add can optionally be scoped by agent_matcher.

Policy Matching

When an agent uses a secret handle in a tool call, agentd checks all active policies for a match:

- Does

secret_patternmatch the secret name? - Does

tool_patternmatch the tool being invoked? - If

host_patternis set, does it match the destination host in the request? - Is the policy not expired?

- Is the policy under its

max_useslimit? - If

agent_matcheris set, does the calling agent match?

If all matching conditions pass, the policy approves the use, increments its use_count, and records the policy ID in the audit trail.

If no policy matches, the request is denied with NoPolicyMatch and the event is logged as a warning. Full HITL queue routing for unmatched requests is planned for a future phase.

Using Secrets in Agents

Agents never see secret values. They reference secrets by name using the handle syntax, and the daemon substitutes the plaintext at dispatch time.

Handle Syntax

{{secret:<name>}}

Use handles anywhere in tool call JSON arguments:

{

"url": "https://api.openai.com/v1/chat/completions",

"headers": {

"Authorization": "Bearer {{secret:openai-key}}"

}

}

{

"url": "https://api.example.com/data?key={{secret:api-key}}"

}

The daemon:

- Parses the tool input JSON

- Finds all

{{secret:<name>}}occurrences - Checks the agent has

secret.use:<name>capability - Finds an active pre-approval policy matching (name, tool, host)

- Substitutes the plaintext inline in the tool input (only for the handler, never logged)

- Calls the tool handler with the substituted input

- Scrubs the tool output for any secret values before returning to the agent

Manifest Declaration

Declare which secrets an agent may use:

spec:

capabilities:

- secret.use:openai-key

- secret.use:db-* # glob: any secret starting with "db-"

SDK Usage

The Agent SDK does not need special handling for secrets; just pass the handle as a string in your tool input:

#![allow(unused)] fn main() { let result = agent.invoke_tool("web.fetch", json!({ "url": "https://api.openai.com/v1/models", "headers": { "Authorization": "Bearer {{secret:openai-key}}" } })).await?; }

If the secret is not registered, the capability is missing, or no policy matches, the call returns a ToolFailed error describing why.

Output Scrubbing

After the tool handler returns, agentd scans the result for any registered secret values. If found, they are replaced with [REDACTED:<name>]:

{"body": "Error: invalid token [REDACTED:openai-key]"}

This prevents accidental leakage into the agent's LLM context.

sandbox.exec and Secrets

The sandbox.exec tool supports injecting secrets as environment variables in the sandboxed process:

{

"runtime": "sh",

"code": "curl -H \"Authorization: Bearer $OPENAI_KEY\" https://api.openai.com/v1/models",

"secrets": {

"OPENAI_KEY": "{{secret:openai-key}}"

}

}

The environment variable is set for the child process only. It does not appear in tool logs.

Handle Syntax

Secret handles use a simple template syntax embedded in JSON strings.

Format

{{secret:<name>}}

{{and}}are the delimiterssecret:is the literal prefix<name>is the exact name used when registering the secret withash secrets add

Valid Examples

{{secret:openai-key}}

{{secret:db-password}}

{{secret:analytics-api-key-prod}}

Placement in Tool Input

Handles can appear:

- As the entire value of a JSON string field

- As a substring within a JSON string field (embedded in a URL, header value, etc.)

{

"api_key": "{{secret:stripe-key}}",